Since the 1940s, digital computing machinery has provided the foundation for executing intricate algorithms that prove mathematical theorems or play strategic board games like chess. This capability goes beyond conventional computational tasks and is widely recognized as artificial intelligence. This blog covers the core tenets of artificial intelligence, its many applications, and the step-by-step roadmap for developing an AI system.

What is AI?

Artificial Intelligence (AI) remains a concept widely circulated, yet its intricacies are frequently misconstrued. Situated within the domain of computer science, AI aspires to engineer software equipped with cognitive faculties, enabling it to execute functions traditionally necessitating human intellect.

Contrary to cultural depictions such as HAL or the Terminator, the realm of AI leans more towards empirical data analytics than fantastical narratives. The objective is to augment computing with human-like cognition, but the current state of technology remains far from the sensationalized portrayals in popular media.


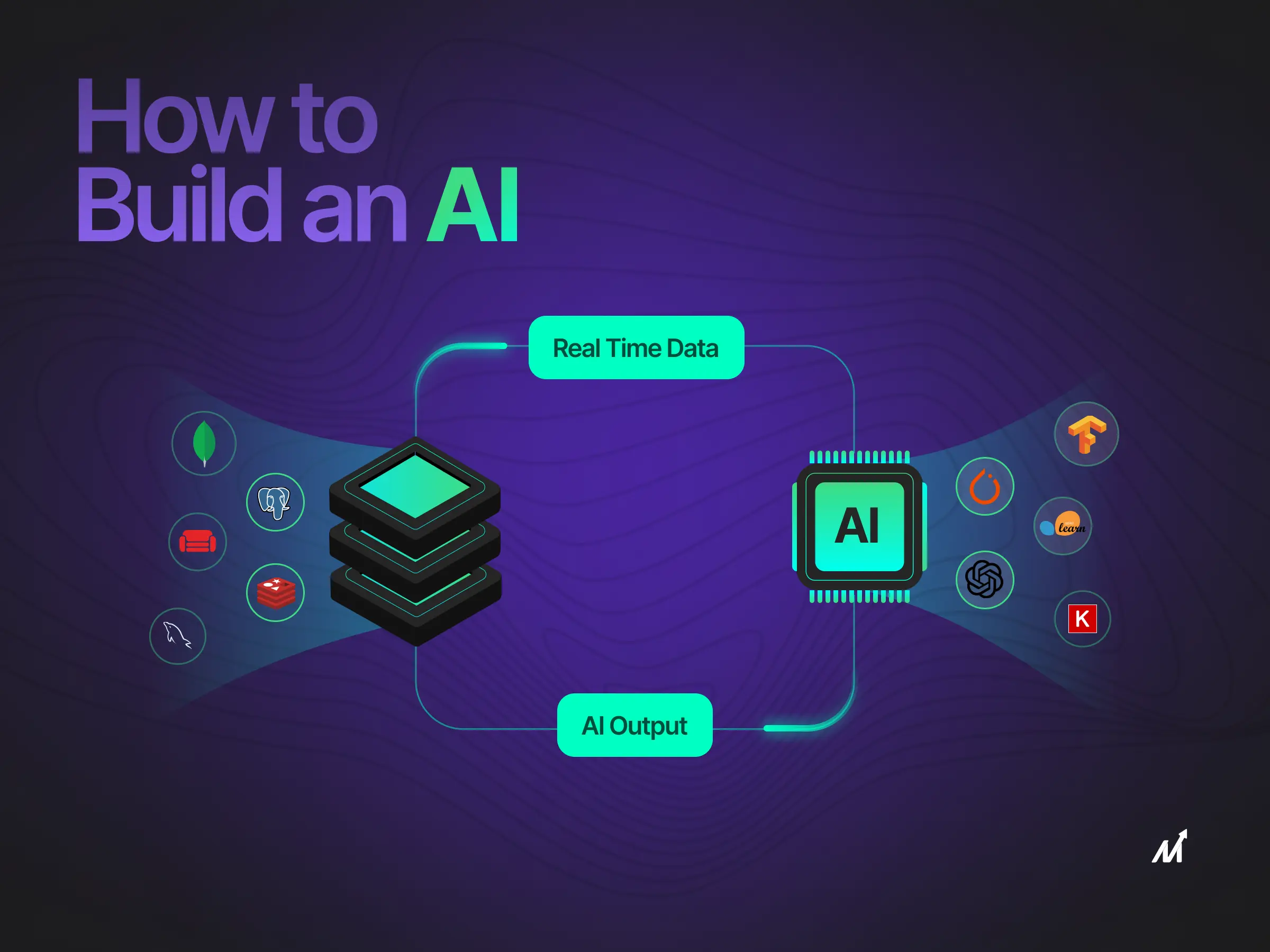

Categories of Artificial Intelligence Systems

Before developing an AI system, it is worth examining three foundational categories of AI:

1. Specialized Artificial Intelligence (SAI)

Focused on accomplishing a singular operation, Specialized Artificial Intelligence embodies the category commonly termed ‘weak AI.’ For instance, machine learning models specializing in sentiment analysis, computer vision algorithms aimed at image recognition, or decision trees in autonomous vehicles fit this classification. These systems excel in a confined domain but remain insular in their capabilities, diverging significantly from multifaceted AI constructs portrayed in speculative fiction.

SAI mechanisms like Amazon’s Alexa, Apple’s Siri, or GPT-4 operate within the boundaries of a specific computational function. Their prowess is limited to their predefined operational sphere.

2. Generalized Artificial Intelligence (GAI)

In contrast, Generalized Artificial Intelligence aspires to emulate human-like cognitive functions. This concept, often termed ‘strong AI,’ envisions a machine’s ability to adapt and excel in various intellectual activities. It remains theoretical: no existing AI model demonstrates this level of cognitive adaptability and analytical proficiency.

Engineers and data scientists invest concerted efforts in advancing towards GAI. Nevertheless, the feasibility of creating such a multifaceted AI framework remains a substantial academic contention.

3. Hyper-Intelligent Artificial Systems (HIAS)

Surpassing even Generalized Artificial Intelligence, Hyper-Intelligent Artificial Systems (HIAS) exist largely in conceptual frameworks. In this hypothetical landscape, HIAS would outperform human intellect across an exhaustive range of disciplines, from complex problem-solving to emotional intelligence.

Though a recurring theme in speculative literature, attaining hyper-intelligence remains an elusive target; given the challenges faced in the development of GAI, the transition to a HIAS state represents an insurmountable leap with our present technological infrastructure.

Traditional Systems Vs. AI System: A Technical Dissection

1. Data-driven vs. Rule-based

Explicit rules guide a system’s behavior in conventional programming. Developers create code that precisely follows the rules to accomplish a predetermined goal. Because each function and activity inside the system is explicitly programmed, the software has little to no flexibility to adapt or learn. In contrast, developing an AI system involves leveraging data-driven approaches. AI systems use machine learning algorithms to ingest large amounts of data, recognize patterns, and learn how to carry out tasks or make choices. These algorithms improve their performance by updating themselves as fresh input arrives.
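As a rough illustration of the contrast above, here is a minimal Python sketch. The spam-filter scenario, the function names, and the tiny training set are all hypothetical and chosen only for illustration; a real system would use a proper machine learning library and far more data.

```python
from __future__ import annotations


def rule_based_is_spam(message: str) -> bool:
    """Traditional approach: a developer hard-codes the rule."""
    banned = {"lottery", "prize"}  # fixed list chosen by the developer
    return any(word in banned for word in message.lower().split())


def learn_spam_words(examples: list[tuple[str, bool]]) -> set[str]:
    """Data-driven approach: derive indicator words from labeled examples.

    A word counts as a spam indicator if it appears in spam messages
    and never in legitimate ones (a deliberately naive heuristic).
    """
    spam_words: set[str] = set()
    ham_words: set[str] = set()
    for text, is_spam in examples:
        words = set(text.lower().split())
        (spam_words if is_spam else ham_words).update(words)
    return spam_words - ham_words


def learned_is_spam(message: str, indicators: set[str]) -> bool:
    """Classify a message using indicators learned from data."""
    return any(word in indicators for word in message.lower().split())


# Hypothetical labeled data: (message, is_spam)
training = [
    ("win a free cruise today", True),
    ("claim your free voucher", True),
    ("lunch today at noon", False),
    ("your invoice is attached", False),
]
indicators = learn_spam_words(training)

print(rule_based_is_spam("free cruise offer"))           # rule never anticipated "free"
print(learned_is_spam("free cruise offer", indicators))  # learned from the examples
```

The rule-based function only changes when someone edits its code, while the learned version changes whenever it is given different training examples.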

2. Dynamic vs. Static

The operational behavior of traditional programs is fixed at build time, making them static by nature. Adjusting to changing conditions requires direct human participation, typically by modifying code or upgrading systems. Developing an AI, however, involves creating systems that exhibit dynamic behavior. AI systems are built to adjust automatically to changing circumstances, environmental alterations, or variations in the data stream. This makes AI well suited to applications requiring rapid flexibility and decision-making, and it reduces the need for ongoing manual intervention.

3. Black Box vs. Transparent

The decision-making processes in traditional programming models are typically visible, making them easy to check for consistency and rationality. Each line of code has a specific function, and the logic follows a predictable path, making it simple to debug problems or confirm results. In contrast, AI systems, particularly machine learning algorithms and neural networks, often function as a “black box.” The inner workings of their decision-making are not easily interpretable, even though these algorithms can produce extremely precise and complex outputs. This presents difficulties in situations that need transparency and auditability, such as healthcare diagnostics or legal decision-support systems.

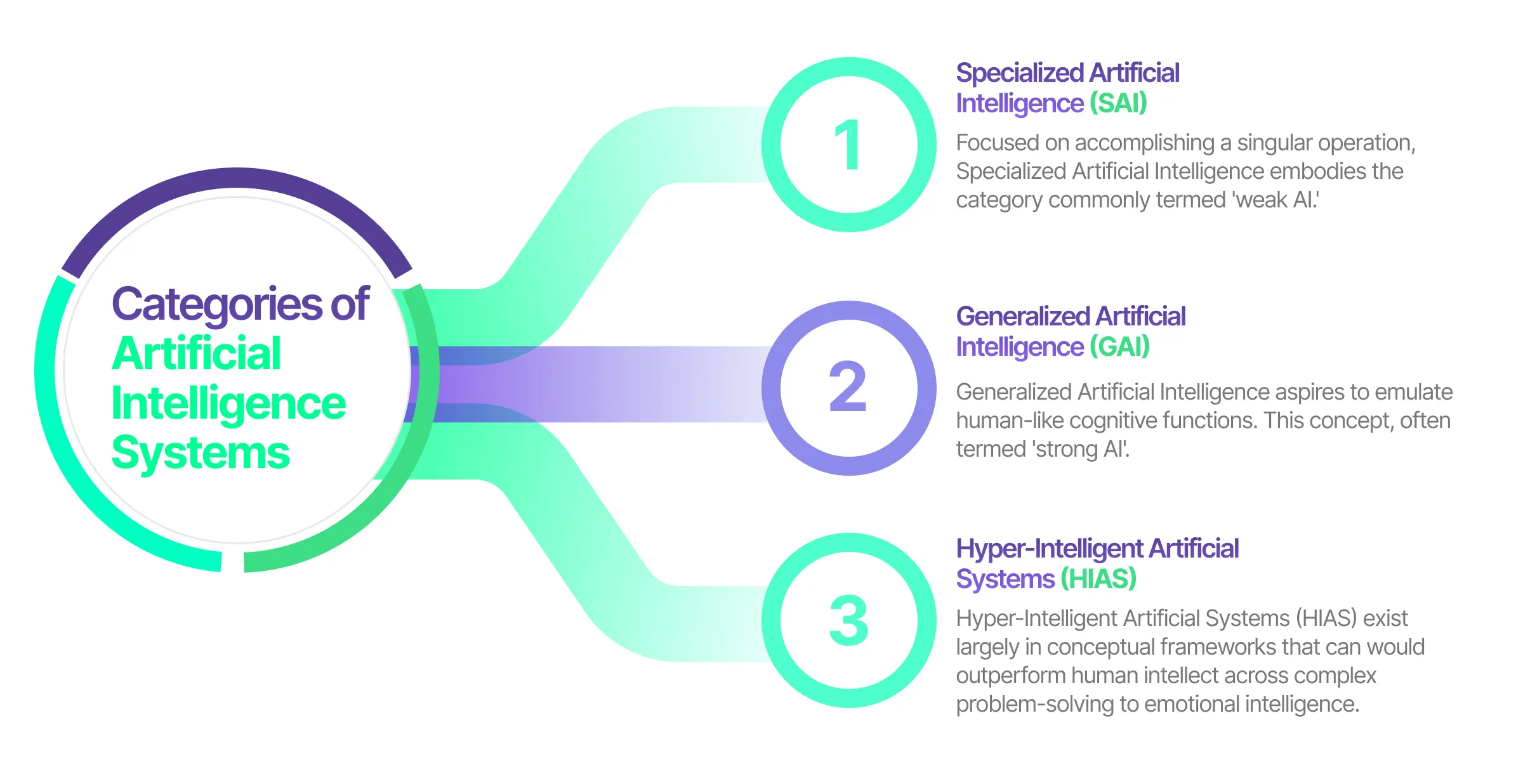

Developing an AI System: Key Prerequisites

Developing a sophisticated artificial intelligence system requires several crucial elements to be carefully orchestrated. Below are the requisite building blocks that make up the anatomy of an AI system:

- Data Sourcing: The quality and comprehensiveness of the data ingested for model training and validation are paramount to the architecture of an AI system. Data also serves as the base for deep learning. It can be sourced from multiple repositories, such as relational databases, IoT sensors, or web scrapers that aggregate information from across the web.

- Algorithmic Foundation: Algorithms act as the cognitive scaffolding on which AI systems are erected. These are typically built using machine learning frameworks or deep learning methodologies. They teach the AI model how to interpret data, allowing it to extract insights, make predictions, or reach informed decisions.

- Technological Infrastructure: The substrate that enables an AI model’s creation, training, and real-time functioning is a blend of hardware and software assets. The hardware side usually consists of a network of CPUs and GPUs that handle complex computational tasks. On the software side, a balanced combination of operating systems and specialized frameworks like TensorFlow or PyTorch plays an instrumental role.

- Domain-Specific Expertise: The endeavor to construct a high-caliber AI system benefits significantly from the involvement of domain experts. Specializations in data science, machine learning, natural language processing, or computer vision, among other disciplines, contribute to refining the technical nuances of the system. Collaborating with or recruiting individuals with high levels of expertise can greatly improve an AI project’s chances of success.


Programming Languages Used to Build AI

Selecting the ideal programming language is a pivotal decision when developing an AI system. This choice is influenced not only by the language’s inherent capabilities but also by its alignment with AI development needs.

In the space of AI, a constellation of programming languages shines, each offering unique strengths. Developers choose these languages because they suit AI’s demands, because they have been enriched with AI-centric functionality, or because a vibrant community has grown around them, crafting tools and resources for AI development.

1. Python: A Multifaceted Protagonist

Python’s reputation as a leading programming language is well-deserved. It is an interpreted, all-purpose language celebrated for its simplicity, legibility, and an extensive array of packages, libraries, and frameworks. In the AI arena, Python distinguishes itself with a myriad of specialized tools. One notable example is PyTorch, a machine learning framework with a user-friendly Python interface (and a C++ interface for those seeking a challenge). Python’s dominance in data science is a testament to its versatility and widespread acceptance.

2. Julia: The New Vanguard

Julia, the newest entrant in this field, was meticulously crafted to be a data science powerhouse. It addresses many of the shortcomings found in other languages, boasting less syntactic complexity than Java or C++ and surpassing the speed of Python or R. As a language, it is gradually carving a niche in the data science sphere, marking itself as a language to watch for those interested in cutting-edge technologies.

3. R: The Academic Pillar

Before Python’s ascent, R was the undisputed leader in data science. Originating as an open-source variant of the S language, it has long been a staple in academic circles. R’s user experience may be challenging, yet its rich collection of libraries, deeply rooted in scientific research, remains unparalleled.

Other languages, such as Scala, Java, and C++, also play significant roles in AI development. These languages are renowned for their widespread use, both within and beyond software engineering. Their appeal lies in their robust performance and the maturity of their ecosystems.

Overall, the choice of programming language for AI hinges on various factors, including ease of use, community support, speed, and the availability of specialized libraries and frameworks. The evolution of these languages continues to shape the AI landscape, offering a diverse toolkit for developers and researchers in this ever-evolving field.

Defining Artificial Intelligence Algorithm

What precisely constitutes an artificial intelligence (AI) algorithm? Algorithmic logic is the backbone of mathematical computations and computational operations in computer science. Distilled to its essence, an AI algorithm is a computational blueprint that instructs a machine in autonomous learning and decision-making.

AI algorithms deviate significantly from rudimentary algebraic equations. They operate through intricate rule sets that shape the machine’s procedural steps and its capacity for self-improvement. Absent such algorithmic frameworks, artificial intelligence would remain purely conceptual.

Operational Mechanics of AI Algorithms

While traditional algorithms may exhibit straightforward operational dynamics, AI algorithms thrive in complexity. Central to the function of an AI algorithm is the intake and assimilation of training data, serving as the algorithm’s educational foundation. This data’s source, categorization, and labeling distinguish one AI algorithmic approach from another.

At its operational core, an AI algorithm ingests training data—whether labeled or unlabeled, developer-supplied, or autonomously collected—and uses it as a basis for learning and task execution. Some AI algorithms employ self-learning mechanisms capable of incorporating novel data to refine and adapt their operational methods. Conversely, other algorithms necessitate manual calibration by software engineers for optimal performance tuning.
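To make the idea of an algorithm refining itself from incoming data more concrete, here is a minimal sketch of a perceptron that updates its weights one labeled example at a time. The class name, the learning rate, and the toy dataset (the logical OR function) are assumptions made for illustration; this is one simple instance of learning from labeled data, not a description of any particular production system.

```python
class OnlinePerceptron:
    """A tiny linear classifier that learns from one example at a time."""

    def __init__(self, n_features: int, learning_rate: float = 1.0) -> None:
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, x: list) -> int:
        # Weighted sum of the inputs; positive scores map to class 1.
        score = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1 if score > 0 else 0

    def update(self, x: list, label: int) -> None:
        # Adjust the parameters only when the current prediction is wrong.
        error = label - self.predict(x)
        if error != 0:
            step = self.learning_rate * error
            self.weights = [w + step * xi for w, xi in zip(self.weights, x)]
            self.bias += step


# Hypothetical labeled training data: inputs and the OR of the two inputs.
stream = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

model = OnlinePerceptron(n_features=2)
for _ in range(10):  # replay the stream a few times
    for features, label in stream:
        model.update(features, label)

predictions = [model.predict(features) for features, _ in stream]
print(predictions)
```

Because `update` accepts a single example, the same method can keep being called as new labeled data arrives after the initial training pass.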

In summary, an AI algorithm is a complex orchestration of rules, enabling machines to perform tasks autonomously by learning from data. It serves as the engine and the steering wheel of an AI system, driving its ability to learn, adapt, and execute tasks. Whether self-sufficient in their learning or requiring external intervention for refinement, AI algorithms remain the cornerstone of artificial intelligence, shaping its capabilities and evolution.

Process of Developing an AI

With global revenue projected to reach $62.5 billion in 2022, AI technology is undeniably at the forefront of technological evolution. The question is: how does one develop a sophisticated AI system or AI software from the ground up? The procedure can be broken down into several iterative steps, outlined below.

Step 1: Defining the Problem Space for AI Application

Before initiating any product development, it is crucial to identify the issues that end-users grapple with. Recognizing these challenges forms the foundation for a value proposition, that is, a commitment to deliver value. Furthermore, once the initial product iteration, or Minimum Viable Product (MVP), has been developed, thorough vetting is indispensable for promptly fixing any glitches.

Step 2: Curating and Refining the Necessary Data for Developing an AI

Once the problem framework is distinctly outlined, the next step is to select the optimal data sources. Acquiring high-quality data takes priority over enhancing the AI model itself. Data can generally be divided into two subsets:

- Structured Data: This data category is characterized by well-defined information, recognizable patterns, and readily searchable parameters. It could include names, addresses, and other categorically aligned data points. Structured data can also support generative AI applications.

- Unstructured Data: In contrast to structured data, this category lacks uniformity and consistent patterns. It comprises audio files, images, and other similar forms of content.

After acquiring the necessary data, we must undertake meticulous cleaning procedures to mitigate errors and omissions, thereby amplifying the data’s quality.
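A cleaning pass of this kind can be sketched in a few lines of Python. The record layout (`name` and `email` fields), the sample rows, and the specific rules (trim whitespace, lowercase emails, drop incomplete rows, drop duplicates) are assumptions for illustration; real pipelines define their own rules per dataset.

```python
def clean_records(records: list) -> list:
    """Normalize fields, drop incomplete rows, and remove duplicates."""
    cleaned = []
    seen_emails = set()
    for record in records:
        # Treat missing (None) values as empty strings before normalizing.
        name = (record.get("name") or "").strip()
        email = (record.get("email") or "").strip().lower()
        if not name or not email:
            continue  # omission: a required field is missing
        if email in seen_emails:
            continue  # duplicate of an earlier record
        seen_emails.add(email)
        cleaned.append({"name": name, "email": email})
    return cleaned


# Hypothetical raw structured data with typical defects.
raw = [
    {"name": " Ada ", "email": "ADA@example.com"},
    {"name": "Ada", "email": "ada@example.com "},   # duplicate after normalizing
    {"name": "", "email": "x@example.com"},          # missing name
    {"name": "Grace", "email": None},                # missing email
    {"name": "Linus", "email": "linus@example.com"},
]

cleaned = clean_records(raw)
print(cleaned)
```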

Step 3: Algorithmic Framework Design for Developing an AI

Translating the predefined problem into computational steps necessitates the construction of specialized algorithms. In the context of AI, these are often machine learning algorithms aimed at prediction or classification tasks. Consequently, these mathematical recipes empower the AI system to decipher patterns within the dataset, enabling learning.

Step 4: Training and Optimization of Algorithms (Training Data)

With the algorithmic framework in place, the next phase calibrates it using the amassed high-quality data. The algorithm then undergoes training iterations to optimize its performance metrics. In scenarios requiring high precision, supplementary data may be necessary to achieve the desired level of model accuracy.
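The training iterations described above can be illustrated with a minimal sketch: logistic regression fitted by gradient descent on a single feature. The toy dataset, the learning rate, and the iteration count are assumptions chosen for illustration, and logistic regression is simply one common classification algorithm, not the only option.

```python
import math


def sigmoid(z: float) -> float:
    """Map a real-valued score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def mean_log_loss(w: float, b: float, xs: list, ys: list) -> float:
    """Average cross-entropy loss; lower means a better fit."""
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for x, y in zip(xs, ys):
        p = min(max(sigmoid(w * x + b), eps), 1 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(xs)


def train(xs: list, ys: list, learning_rate: float = 0.5, iterations: int = 2000):
    """Repeatedly nudge the weight and bias against the loss gradient."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(iterations):
        grad_w = sum((sigmoid(w * x + b) - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(sigmoid(w * x + b) - y for x, y in zip(xs, ys)) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical one-feature dataset: small values are class 0, large are class 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [0, 0, 1, 1]

initial_loss = mean_log_loss(0.0, 0.0, xs, ys)
w, b = train(xs, ys)
final_loss = mean_log_loss(w, b, xs, ys)
predictions = [1 if sigmoid(w * x + b) >= 0.5 else 0 for x in xs]

print(f"loss before training: {initial_loss:.3f}, after: {final_loss:.3f}")
print(predictions)
```

Tracking the loss before and after training is one simple way to check that the iterations are actually improving the model.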

The ultimate objective during this stage is the establishment of a minimum acceptable performance threshold. For instance, in a social networking application aimed at flagging fraudulent accounts, the system could assign a ‘fraudulence index’ ranging from zero to one to each user account. Upon comprehensive evaluation, the system could escalate accounts surpassing a threshold of 0.9 for manual verification by a specialized fraud prevention team.
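The escalation rule in this example can be sketched as follows. The account names and scores are made up, and the function name is hypothetical; only the zero-to-one index and the 0.9 threshold come from the example above.

```python
from __future__ import annotations

REVIEW_THRESHOLD = 0.9  # threshold taken from the example above


def accounts_to_review(scores: dict[str, float],
                       threshold: float = REVIEW_THRESHOLD) -> list[str]:
    """Return accounts whose fraudulence index surpasses the threshold."""
    return sorted(account for account, score in scores.items() if score > threshold)


# Hypothetical model outputs: account -> fraudulence index in [0, 1].
scores = {"alice": 0.12, "bob": 0.95, "carol": 0.90, "dave": 0.99}

flagged = accounts_to_review(scores)
print(flagged)
```

An account scoring exactly 0.9 is not escalated here because the rule is written as strictly greater than the threshold; whether the boundary is inclusive is a design choice for the team to make.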

Step 5: Platform Selection Strategy for Developing an AI

When architecting an artificial intelligence system, the choice of computational environment is a critical decision. Two primary paradigms exist: in-house frameworks and cloud-based services. Each has distinct advantages and trade-offs.

In-house Frameworks

Scikit-Learn, TensorFlow, and PyTorch are standard open-source choices for internal model development. Deploying models on in-house infrastructure gives a company granular control over resources and data privacy. However, this approach requires adequate hardware capabilities and entails maintenance overhead.

Cloud-based Services

Leveraging machine learning within the cloud accelerates the process from experimentation to production. Platforms offering machine learning as a service (MLaaS) enable swift model training and deployment. Moreover, these platforms typically support integrated development environments (IDEs) and tools like Jupyter Notebooks, reducing the barriers to entry for model development and deployment.

Step 6: Programming Language Selection

The choice of programming language influences the software layer that serves as the cornerstone of any AI system. Languages like C++, Java, Python, and R each contribute uniquely to the objectives and constraints of the AI system.

- Python is a gateway for novices due to its straightforward syntax and robust machine-learning libraries. It is often the first choice for data manipulation and algorithm prototyping.

- C++ excels in scenarios demanding high performance, such as real-time analytics or AI in gaming. Its superior speed and efficiency make it a suitable choice for these applications.

- Java offers attributes such as ease of debugging and platform independence. It is frequently used in large-scale enterprise applications and is well suited to search engine algorithms.

- R is a favored tool in data science for statistical computation and predictive analysis.

Step 7: Implementation and Continual Oversight for Developing an AI Successfully

Once the AI model reaches a state of operational viability, the next logical step is deployment. After deployment, continuous monitoring is critical for maintaining system integrity and performance. Regular oversight helps detect model drift and provides insights into anomalies or unexpected behaviors. Immediate remedial action can then mitigate operational risks.
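One simple way to watch for drift after deployment is to compare recent values of an input feature against a baseline captured at training time. The sketch below uses a z-score on the mean; the function names, the threshold of 3.0, and the sample numbers are assumptions for illustration, and production monitoring usually tracks many features and model metrics with more robust statistics.

```python
from statistics import mean, stdev


def drift_score(baseline: list, recent: list) -> float:
    """Z-score of the recent mean relative to the baseline distribution."""
    base_mean = mean(baseline)
    base_std = stdev(baseline)
    if base_std == 0:
        # Constant baseline: any change at all counts as unbounded drift.
        return 0.0 if mean(recent) == base_mean else float("inf")
    standard_error = base_std / len(recent) ** 0.5
    return abs(mean(recent) - base_mean) / standard_error


def has_drifted(baseline: list, recent: list, z_threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean is implausibly far from the baseline."""
    return drift_score(baseline, recent) > z_threshold


# Hypothetical feature values seen during training and after deployment.
baseline = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]
recent_stable = [10, 9, 11, 10]
recent_shifted = [15, 16, 14, 15]

print(has_drifted(baseline, recent_stable))
print(has_drifted(baseline, recent_shifted))
```

A flagged window would typically trigger a closer look at the data source or a retraining run rather than an automatic change to the model.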

How Can Markovate Help in Developing an AI?

At Markovate, we believe that the journey towards crafting a high-performance AI system isn’t just a series of technical checkpoints; it’s an evolving narrative that we tell along with our clients. To illustrate, let’s pull back the curtain on how we go about this fascinating AI software development process.

When it comes to data, often called the lifeblood of AI, we don’t just collect it; we treat it with the respect it deserves. We pore over datasets, weed out the noise, and enrich the meaningful signals. This hands-on, almost artisanal approach ensures that when our AI models learn, they learn from the best.

We’re not just a group of AI developers; we’re a community of passionate AI developers who specialize in domains like machine learning, natural language processing, and computer vision, and who offer AI as a service. This intersection of technical prowess and domain expertise allows us to fine-tune algorithms that are not just cutting-edge but also highly relevant to specific business challenges. We collaborate, brainstorm, and sometimes even disagree, but that’s all part of what makes our AI solutions, whether conversational AI, an AI chatbot, or an AI application, so robust.

We’re not in the business of cutting corners. We deploy a state-of-the-art hardware and software environment that gives our AI models the space to stretch their legs, computationally speaking. With an optimal ecosystem, our AI models can work through intricate calculations and voluminous data with agility and accuracy. So, what does all this add up to? At Markovate, we make AI development less of a transaction and more of a partnership.