LangChain is an open-source framework for building applications powered by large language models (LLMs). It provides a set of tools and interfaces that simplify working with these models, and it is available as libraries for both Python and JavaScript/TypeScript.

In this blog post, we look at the key features and components of LangChain: its abstraction capabilities, its generic interface for foundation models, and how it integrates PromptTemplates and external data sources. We also discuss the advantages of using Hugging Face Hub or OpenAI GPT-3 within the LangChain ecosystem.

Along the way, we cover building applications with SimpleSequentialChains and persisting memory between calls. Finally, we look at techniques for evaluating and optimizing agents built with the framework.

LangChain Framework Overview

LangChain Features:

The LangChain framework aims to simplify the development of applications powered by Large Language Models (LLMs). Its architecture consists of several modules that work together in Natural Language Processing (NLP) applications: they handle interaction with language models, manage data, and enable more advanced capabilities such as chains, agents, and memory.

Model Interfacing

- Known in LangChain’s documentation as Model I/O, this component is the interface between LangChain and the underlying language models. It formats the inputs sent to a model (prompts) and parses useful structure out of the model’s outputs. Because of this layer, LangChain can work with different types of language models in a model-agnostic way.
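
The round trip this component manages (format the input, call the model, parse the output) can be sketched in plain Python. This is not LangChain’s API: `format_prompt`, `parse_comma_list`, `run`, and `fake_model` are hypothetical names, and the fake model returns a canned string so the example runs without an API key. LangChain ships its own output parsers, including one for comma-separated lists, which play the role of `parse_comma_list` here.

```python
from typing import Callable, List

# Hypothetical stand-in for a model client: any function that maps a
# prompt string to a completion string can play the "model" role.
ModelFn = Callable[[str], str]

def format_prompt(topic: str, n: int) -> str:
    """Input side: turn structured arguments into the text the model sees."""
    return f"List {n} {topic}, separated by commas. Reply with the list only."

def parse_comma_list(completion: str) -> List[str]:
    """Output side: turn raw model text back into a Python list."""
    return [item.strip() for item in completion.split(",") if item.strip()]

def run(model: ModelFn, topic: str, n: int) -> List[str]:
    # Callers never handle raw strings; swapping `model` leaves this unchanged.
    return parse_comma_list(model(format_prompt(topic, n)))

def fake_model(prompt: str) -> str:
    # Canned, slightly messy reply so the parser has something to clean up.
    return "red, green , blue,"

print(run(fake_model, "colors", 3))  # ['red', 'green', 'blue']
```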

Data Management and Querying

- This module, covered under Retrieval in LangChain’s documentation, handles data logistics so that Large Language Models (LLMs) can work with data they were not trained on. Data is loaded, split, and transformed (for example, into embeddings), stored in a database such as a vector store, and later retrieved by query so it can be passed to the model. LangChain also offers chains that turn natural-language questions into SQL queries against relational databases.
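
The SQL path can be sketched with Python’s built-in `sqlite3` module. The model step is faked: `fake_text_to_sql` and `answer_from_db` are hypothetical names, and the table contents are illustrative. The flow (question, generated SQL, rows, text for the model) mirrors the idea behind LangChain’s SQL chains, not their actual interface.

```python
import sqlite3

def fake_text_to_sql(question: str) -> str:
    # Stand-in for an LLM that writes SQL; a real model would generate this
    # from the question plus the table schema.
    return "SELECT name, price FROM products WHERE price < 20 ORDER BY price"

def answer_from_db(conn: sqlite3.Connection, question: str) -> str:
    sql = fake_text_to_sql(question)
    rows = conn.execute(sql).fetchall()
    # Rows are turned back into text so a model could phrase the final answer.
    facts = "; ".join(f"{name} costs {price}" for name, price in rows)
    return f"Question: {question}\nDatabase results: {facts}"

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, price REAL)")
conn.executemany(
    "INSERT INTO products VALUES (?, ?)",
    [("pen", 2.5), ("lamp", 35.0), ("mug", 9.0)],
)
print(answer_from_db(conn, "Which products cost less than 20?"))
```

In a real deployment, model-generated SQL should run against a read-only connection, since the model can emit any statement.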

Component Linkage

- Known as Chains, this module lets applications built with LangChain grow in complexity. It links multiple LLM calls together, or connects LLMs with other software components, so that more sophisticated tasks run as a single workflow. The most basic building block, LLMChain, combines a prompt template with a model.
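
The core idea of a chain (a prompt template plus a model, followed by an ordinary code component) fits in a few lines. `PromptModelChain`, `fake_model`, and `word_count` are hypothetical names chosen for this sketch; LangChain’s LLMChain has its own constructor and methods.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class PromptModelChain:
    """Hypothetical minimal 'LLM chain': a template plus a model callable."""
    template: str                   # uses str.format placeholders
    model: Callable[[str], str]

    def run(self, **inputs: str) -> str:
        return self.model(self.template.format(**inputs))

def fake_model(prompt: str) -> str:
    # Upper-cases the last prompt line so the output is predictable offline.
    return prompt.splitlines()[-1].upper()

def word_count(text: str) -> int:
    # An ordinary, non-LLM component linked after the model step.
    return len(text.split())

chain = PromptModelChain(template="Rewrite as a headline:\n{text}", model=fake_model)
headline = chain.run(text="langchain links components together")
print(headline, word_count(headline))  # LANGCHAIN LINKS COMPONENTS TOGETHER 4
```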

Intelligent Command Execution

- The Agents module lets an LLM decide which actions to take to solve a problem. Instead of following a fixed sequence, an agent uses the model to choose a tool to call (such as a search engine, a calculator, or a database), observes the result, and repeats until it can answer the request. In this sense, the agent is the decision-making core of the application, determining the chain of actions needed to complete a task.
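
The decide, act, observe loop behind agents can be sketched without any library. `scripted_llm` is a hypothetical stand-in that always picks the calculator first, so the example is deterministic; in LangChain the decision comes from a model prompted with tool descriptions and earlier observations. The tool names and `run_agent` are also illustrative.

```python
from typing import Callable, Dict, List, Tuple

# Tools the agent may choose from; names and behaviour are hypothetical.
TOOLS: Dict[str, Callable[[str], str]] = {
    "calculator": lambda expr: str(sum(int(x) for x in expr.split("+"))),
    "upper": lambda text: text.upper(),
}

def scripted_llm(question: str, history: List[Tuple[str, str, str]]) -> Tuple[str, str]:
    """Stand-in for the model's decision step: returns (action, argument).
    A real agent would prompt a model and parse its reply."""
    if not history:
        return "calculator", "17+25"
    return "finish", f"The answer is {history[-1][2]}"

def run_agent(question: str, max_steps: int = 5) -> str:
    history: List[Tuple[str, str, str]] = []   # (action, argument, observation)
    for _ in range(max_steps):
        action, arg = scripted_llm(question, history)
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)        # execute the chosen tool
        history.append((action, arg, observation))
    return "Stopped: step limit reached"

print(run_agent("What is 17 + 25?"))  # The answer is 42
```

The `max_steps` cap is worth keeping in any agent loop, since a model can otherwise keep choosing actions indefinitely.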

Contextual Memory

- The Memory component lets an application maintain context across user interactions. Depending on the application’s needs, it can provide short-term memory (for example, the most recent messages in a conversation) and long-term memory (information persisted across sessions). This improves the model’s conversational abilities, since earlier inputs can be taken into account when it responds.
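
The split between long-term and short-term memory can be illustrated with a small class that saves every turn to a JSON file and exposes only the last few turns for the prompt. `FileBackedMemory` and its methods are hypothetical; LangChain offers its own memory classes and storage backends.

```python
import json
import os
import tempfile
from typing import Dict, List

class FileBackedMemory:
    """Hypothetical memory: every turn is saved to a JSON file (long-term),
    while `recent(k)` returns only the last k turns (short-term)."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.turns: List[Dict[str, str]] = []
        if os.path.exists(path):
            with open(path, encoding="utf-8") as f:
                self.turns = json.load(f)

    def add(self, user: str, ai: str) -> None:
        self.turns.append({"user": user, "ai": ai})
        with open(self.path, "w", encoding="utf-8") as f:
            json.dump(self.turns, f)

    def recent(self, k: int) -> List[Dict[str, str]]:
        # Guard k <= 0: a slice like turns[-0:] would return everything.
        return self.turns[-k:] if k > 0 else []

path = os.path.join(tempfile.mkdtemp(), "memory.json")
session1 = FileBackedMemory(path)
session1.add("My name is Ada.", "Nice to meet you, Ada.")
session1.add("I like chess.", "Chess is a great game.")

session2 = FileBackedMemory(path)   # a new session reloads the earlier turns
print(len(session2.turns), session2.recent(1))
```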

Abstraction Capabilities

LangChain’s abstractions let developers use different language models without dealing with each provider’s API details, which makes AI-powered applications easier to build and maintain.

Generic Interface for Foundation Models

The framework’s generic interface supports popular language models such as OpenAI’s GPT-3 and models from Hugging Face Transformers. Because application code targets the interface rather than a specific provider, integrating a model is simpler, and newer models can be adopted with fewer code changes.
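
A generic interface boils down to application code depending on one method signature rather than on a provider. In this sketch `ChatModel`, `generate`, and the two fake backends are hypothetical; LangChain defines its own base classes for models, and real backends would call OpenAI’s API or load a model from Hugging Face Hub.

```python
from typing import Dict, Protocol

class ChatModel(Protocol):
    """Hypothetical common interface that every backend satisfies."""
    def generate(self, prompt: str) -> str: ...

class FakeHostedModel:
    # Placeholder for a client that would call a hosted API such as OpenAI's.
    def generate(self, prompt: str) -> str:
        return f"[hosted] {prompt}"

class FakeLocalModel:
    # Placeholder for a model downloaded from Hugging Face Hub and run locally.
    def generate(self, prompt: str) -> str:
        return f"[local] {prompt}"

PROVIDERS: Dict[str, ChatModel] = {"hosted": FakeHostedModel(), "local": FakeLocalModel()}

def summarize(model: ChatModel, text: str) -> str:
    # Application code depends only on the interface, not on a provider.
    return model.generate(f"Summarize: {text}")

for name in ("hosted", "local"):
    print(summarize(PROVIDERS[name], "LangChain overview"))
```

Switching providers then becomes a configuration change (which key to look up) rather than a code change in `summarize`.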

PromptTemplates and External Data Sources

LangChain’s PromptTemplates let developers build prompts from multiple components, such as fixed instructions, examples, and the user’s input. Well-structured prompts tend to produce more accurate responses from AI agents.

Streamlined Prompt Creation

With PromptTemplates, developers can create customized prompts that cater to the needs of various applications, achieving greater flexibility and control over the information fed into their AI models.
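
Assembling a prompt from separate components can be sketched with ordinary string formatting. `build_prompt` is a hypothetical helper, similar in spirit to LangChain’s PromptTemplate and few-shot templates but not their interface; the instructions and examples are made up.

```python
from typing import List, Tuple

def build_prompt(instructions: str, examples: List[Tuple[str, str]], question: str) -> str:
    """Hypothetical helper: combine instructions, few-shot examples,
    and the user's question into one prompt string."""
    example_template = "Q: {q}\nA: {a}"
    shots = "\n\n".join(example_template.format(q=q, a=a) for q, a in examples)
    return f"{instructions}\n\n{shots}\n\nQ: {question}\nA:"

prompt = build_prompt(
    instructions="Answer with a single word.",
    examples=[("Capital of France?", "Paris"), ("Capital of Japan?", "Tokyo")],
    question="Capital of Italy?",
)
print(prompt)
```

Keeping each component separate means the examples or instructions can change per application while the surrounding structure stays fixed.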

Integration with External Data Sources

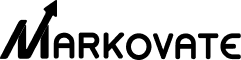

LangChain also supports integration with external data sources such as documents, databases, and APIs. Drawing on these sources, AI agents built with LangChain can give relevant, context-aware answers based on information that is more current or more specific than what the model learned during training.

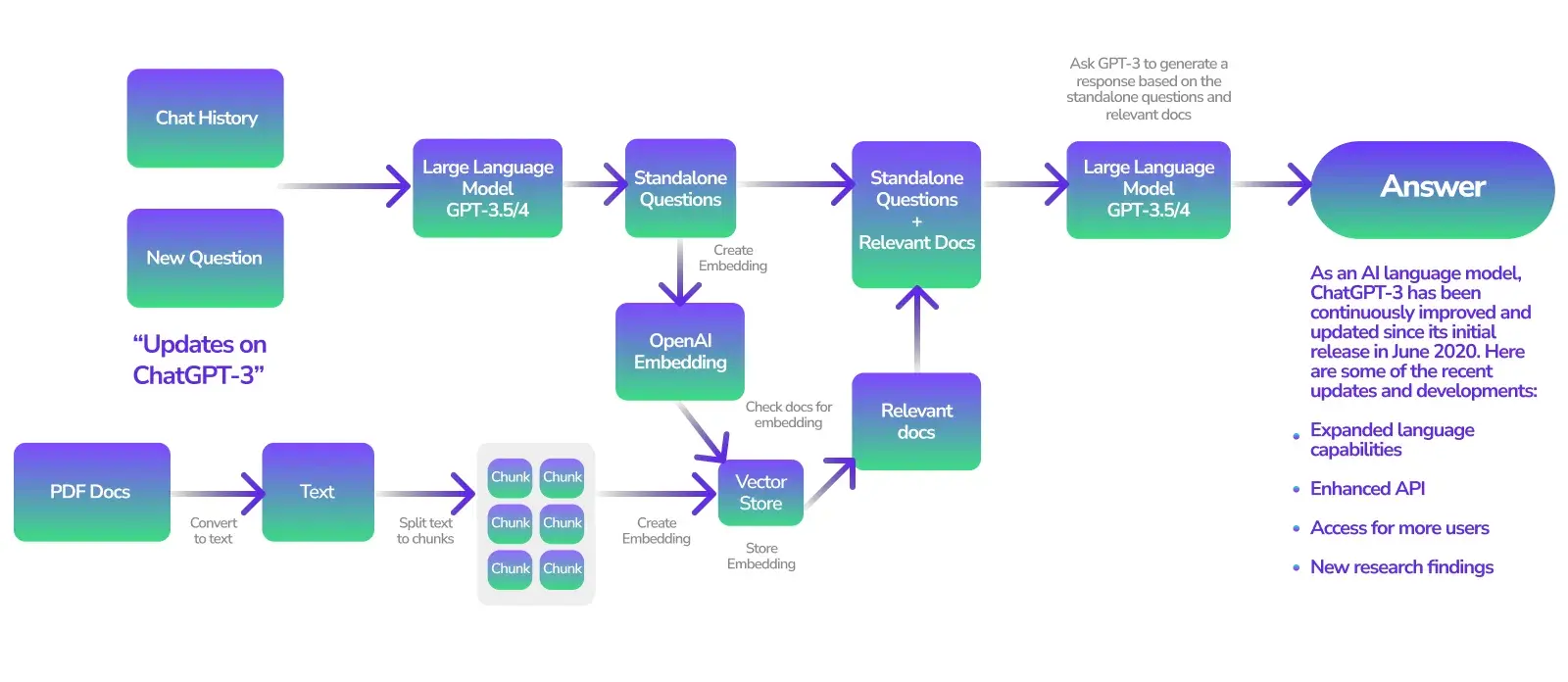
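
Grounding an answer in external documents usually means retrieving the most relevant passages and placing them in the prompt. The sketch below swaps in bag-of-words cosine similarity for the embedding model and vector store a LangChain retriever would normally use; `vectorize`, `retrieve`, and `grounded_prompt` are hypothetical names and the documents are made up.

```python
import math
import re
from collections import Counter
from typing import List

def vectorize(text: str) -> Counter:
    # Bag-of-words counts stand in for a learned embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    q = vectorize(query)
    return sorted(docs, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]

def grounded_prompt(query: str, docs: List[str]) -> str:
    # Retrieved passages become the context the model is told to rely on.
    context = "\n".join(retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

docs = [
    "The office opens at 9am on weekdays.",
    "Parking is free for visitors after 6pm.",
    "The cafeteria serves lunch from noon.",
]
print(grounded_prompt("When does the office open?", docs))
```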

Hugging Face Hub and OpenAI GPT-3 Integration

LangChain supports models from both Hugging Face Hub and OpenAI’s GPT-3, giving developers the flexibility to choose the language model provider that fits their project’s requirements.

Choose Your Language Model Provider

- Hugging Face: Offers a wide range of pre-trained models suitable for various NLP tasks like text classification, summarization, and translation.

- GPT-3: Known for its powerful natural language understanding capabilities that enable advanced conversational agents and content generation applications.

Advantages of Using Hugging Face Hub or OpenAI GPT-3

Integration with popular LLM providers offers several benefits: access to state-of-the-art models, ongoing updates from their developers and communities, and the ability to switch providers as project needs change, which makes development with LangChain more efficient.

Building Applications with SimpleSequentialChains

SimpleSequentialChain, part of the LangChain package, runs several chains in sequence: each step takes a single input and produces a single output, which becomes the input of the next step. This simplifies composing complex systems, such as question-answering pipelines, from multiple components.

Composing Complex Systems Using Sequential Chains

With SimpleSequentialChains, developers can build AI agents that perform tasks such as information retrieval, text summarization, and sentiment analysis by combining different chains in sequence.
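
The single-input, single-output rule of a sequential chain can be shown with plain functions. `simple_sequential`, `summarize`, and `classify_sentiment` are hypothetical; the two steps are trivial fakes standing in for LLM calls, so this illustrates the data flow rather than LangChain’s SimpleSequentialChain class itself.

```python
from typing import Callable, List

Step = Callable[[str], str]   # each step: one string in, one string out

def simple_sequential(steps: List[Step]) -> Step:
    """Hypothetical equivalent of the sequential-chain idea:
    the output of each step becomes the sole input of the next."""
    def run(text: str) -> str:
        for step in steps:
            text = step(text)
        return text
    return run

# Fake "LLM" steps so the example runs offline.
def summarize(text: str) -> str:
    return text.split(".")[0] + "."          # keep the first sentence

def classify_sentiment(text: str) -> str:
    return "positive" if "great" in text.lower() else "neutral"

pipeline = simple_sequential([summarize, classify_sentiment])
print(pipeline("The new release is great. Install it with pip."))  # positive
```

Because each step sees only the previous step’s output, the second step never sees the second sentence; that restriction is what keeps simple sequential pipelines easy to reason about.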

Handling Single or Multiple Queries Effectively

SimpleSequentialChains handle a single query and a series of questions in the same way, passing each input through the same pipeline of calls to Large Language Models (LLMs) such as GPT-3 or Hugging Face models. Breaking a task into well-defined steps makes the quality of the responses easier to test and improve.

Memory Persistence Between Calls

LangChain’s memory features preserve state between calls, which lets AI agents give more context-aware responses.

Benefits of Memory Persistence in LangChain

- Context retention: AI agents can better understand user inputs and provide relevant answers based on past exchanges.

- User experience improvement: Users don’t need to repeat information or rephrase questions, leading to smoother communication and enhanced satisfaction.

Enhancing Conversation Handling with Memory Features

Memory features within LangChain let developers retrieve useful information, such as the most recent messages in a conversation, and pass it into prompts. They also work alongside other components such as PromptTemplates and SequentialChains.
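
Feeding recent messages into a prompt template can be sketched with a bounded buffer. `WindowMemory` and `TEMPLATE` are hypothetical, similar in spirit to LangChain’s windowed conversation memory but not its API; the conversation is made up.

```python
from collections import deque
from typing import Deque, Tuple

class WindowMemory:
    """Hypothetical buffer that keeps only the last k exchanges."""

    def __init__(self, k: int) -> None:
        self.turns: Deque[Tuple[str, str]] = deque(maxlen=k)

    def save(self, user: str, ai: str) -> None:
        self.turns.append((user, ai))   # oldest turn drops out when full

    def as_text(self) -> str:
        return "\n".join(f"Human: {u}\nAI: {a}" for u, a in self.turns)

TEMPLATE = "Conversation so far:\n{history}\n\nHuman: {message}\nAI:"

memory = WindowMemory(k=2)
memory.save("Hi, I'm Ada.", "Hello Ada!")
memory.save("I work on compilers.", "Interesting field.")
memory.save("What's my name?", "Your name is Ada.")
print(TEMPLATE.format(history=memory.as_text(), message="Remind me what I do?"))
```

With `k=2` the first exchange has already been dropped, which is the trade-off of a window: a bounded prompt size at the cost of older context.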

Agent Evaluation and Optimization

LangChain provides standardized interfaces for evaluating AI agents built on generative models, helping developers check their performance and accuracy before using them in real-world applications.

Standardized Interfaces for Agent Evaluations

Developers can use LangChain’s standardized evaluation interfaces, including question-answering evaluation prompts and chains and support for Hugging Face Datasets, to assess the quality of their AI agents throughout the development process.
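
A question-answering evaluation compares an agent’s answers against reference answers and reports a score. LangChain’s QA evaluation chains use a model to grade each answer; this sketch swaps in normalized exact match so it runs offline. `normalize`, `evaluate_qa`, and the example data are hypothetical.

```python
import re
import string
from typing import Dict, List

def normalize(text: str) -> str:
    # Lowercase, drop punctuation, collapse whitespace.
    text = text.lower().translate(str.maketrans("", "", string.punctuation))
    return re.sub(r"\s+", " ", text).strip()

def evaluate_qa(examples: List[Dict[str, str]], predictions: List[str]) -> float:
    """Fraction of predictions matching the reference after normalization
    (exact match stands in for model-based grading)."""
    correct = sum(
        normalize(pred) == normalize(ex["answer"])
        for ex, pred in zip(examples, predictions)
    )
    return correct / len(examples) if examples else 0.0

examples = [
    {"question": "Capital of France?", "answer": "Paris"},
    {"question": "2 + 2?", "answer": "4"},
]
predictions = ["paris.", "five"]
print(evaluate_qa(examples, predictions))  # 0.5
```

Exact match is strict (it marks "five" wrong against "4"), which is one reason model-based grading is often preferred for free-form answers.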

Ensuring Optimal Performance and Accuracy

- PromptTemplates: Use LangChain’s PromptTemplates to customize prompts, ensuring better alignment between user inputs and AI-generated responses.

- Data Source Integration: Integrate external data sources with LLMs for more accurate responses based on information beyond the model itself.

- Evaluation Metrics: Use LangChain’s evaluation tools to measure how effective an agent is, assessing aspects such as response relevance, coherence, and fluency, and use the results to optimize the agent’s overall performance.

LangChain – FAQs

What is LangChain and how can it help you?

LangChain simplifies integrating language models, such as GPT-3 or models from Hugging Face, into your applications, making it easier to build, evaluate, and optimize AI agents.

Is LangChain gaining popularity?

Yes. LangChain has become popular among developers building AI and machine-learning applications, and its adoption is likely to keep growing as more organizations use it to build AI-driven applications.

What models are supported by LangChain?

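LangChain integrates with OpenAI’s models, such as GPT-3, and includes a wrapper for OpenAI’s DALL-E image generation. Through Hugging Face Hub it can also use open models hosted there, such as OpenAI’s GPT-1 and GPT-2, EleutherAI’s GPT-Neo, and Google’s BERT.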

What’s the difference between Pinecone and LangChain?

Pinecone is a managed vector database focused on similarity search over large collections of embeddings. LangChain is a framework for building LLM applications, including AI agents, on top of generative models such as GPT-3 and those available on Hugging Face. The two are complementary: LangChain can use Pinecone as a vector store for retrieval.

Conclusion

Looking for a framework that provides abstraction capabilities, a generic interface for foundation models, and memory persistence between calls? LangChain is well worth a look.

With PromptTemplates and support for external data sources, constructing prompts and grounding them in your own data becomes much simpler.

Its Hugging Face Hub and OpenAI GPT-3 integrations give you a choice of language model providers.

SimpleSequentialChains let you compose complex systems and handle single or multiple queries efficiently.

Finally, LangChain’s agent evaluation tools provide standardized interfaces for checking the performance and accuracy of your agents.